In this series, we’re going to go over the Quality Assurance journey we’ve been on here in the Client Side Integrations (CSI) team at Tessian. Most of this post will be using our experience with the Outlook Add-in, as that’s the piece of software most used by our clients. But the philosophies and learnings here apply to most software in general (regardless of where it’s run) with an emphasis on software that includes a UI.

I’ll admit that the impetus for this work was me sitting in my home office one Saturday morning, knowing that I’d have to start manual testing for an upcoming release in the next two weeks, and just not being able to convince myself to click the “Send” button in Outlook after typing a subject of “Hello world” one more time… But once you start automating UI tests, it just builds on itself and you start being able to construct new tests from existing code. It can be such a euphoric experience. If you’ve ever dreaded (or are currently dreading) running through manual QA tests, keep reading and see if you can implement some of the solutions we have.

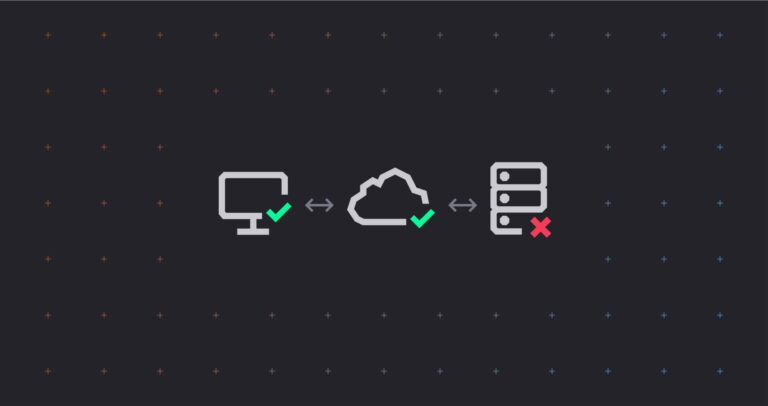

Why QA in the Outlook Add-in Ecosystem is Hard

The Outlook Add-in was the first piece of software we wrote to run on our clients’ computers. That history, combined with the need to work inside some of the oldest software Microsoft develops (Outlook), creates challenges when it comes to QA. These challenges include:

- Detecting faults in the add-in itself

- Detecting changes in Outlook which may result in functionality loss of our add-in

- Detecting changes in Windows that may result in performance issues of our add-in

- Testing the myriad of environments our add-in will be installed in

The last point is the hardest to QA. Even a partial list of the different Outlook configurations shows that the number of test environment permutations just doesn’t scale well:

- Outlook’s Online vs Cached mode

- Outlook edition: 2010, 2013, 2016, 2019 perpetual license, 2019 volume license, M365 with its 5 update channels…

- Connected to On-Premise Exchange Vs Exchange Online/M365

- Other add-ins in Outlook

- Third-party Exchange add-ins (Retention software, auditing, archiving, etc…)

And now add non-Outlook related environment issues we’ve had to work through:

- Network proxies, VPNs, Endpoint protection

- Virus scanning software

- Windows versions

One can see how it would be impossible to predict all the environmental configurations and validate our add-in functionality before releasing it.
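To put a rough number on that, here is a minimal Python sketch that counts the combinations from just three of the Outlook axes listed above. The axis values come from the lists in this post, but treating each of the five M365 update channels as its own edition (and the placeholder channel names) is an assumption made for illustration, and `build_matrix` is a hypothetical helper, not something from our code base.

```python
from itertools import product

# Axis values taken from the lists above. The M365 channel names are
# placeholders (assumption): the post only says there are five channels.
MODES = ["Online", "Cached"]
EDITIONS = [
    "2010",
    "2013",
    "2016",
    "2019 perpetual license",
    "2019 volume license",
] + [f"M365 channel {n}" for n in range(1, 6)]
EXCHANGE = ["On-Premise Exchange", "Exchange Online/M365"]


def build_matrix(*axes):
    """Return every combination of one value from each axis (hypothetical helper)."""
    return list(product(*axes))


# Three axes only: 2 modes * 10 editions * 2 Exchange back ends.
matrix = build_matrix(MODES, EDITIONS, EXCHANGE)
print(len(matrix))

# Every extra yes/no factor (for example, "is another add-in installed?")
# doubles the number of environments again.
with_other_addin = build_matrix(MODES, EDITIONS, EXCHANGE, [False, True])
print(len(with_other_addin))
```

Even this cut-down version gives 40 environments, and 80 once a single yes/no factor is added. Layer on Windows versions, proxies, and virus scanners and the matrix grows far past what anyone can stand up by hand.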

A Brief History of QA in Client Side Integrations (CSI)

As many companies do, we started our QA journey with a QA team. This was a set of individuals whose full-time job was to install the latest commit of our add-in and test its functionality. The team’s remit quickly grew to include managing and sharing VMs to ensure we worked on all those permutations above. They also worked hard to try and emulate the external factors of our clients’ environments like proxy servers, weak Internet connections, etc… This model works well for exploratory testing and finding strange edge cases. Where it doesn’t work or scale well is communication (the person seeing the bug isn’t the person fixing the bug) and automation (every release takes more and more person-power as the list of regression issues gets longer and longer).

In 2020 Andy Smith, our Head of Engineering, made a commitment that all QA in Tessian would be performed by Developers. This had a large impact on the CSI team as we test an external application (Outlook) across many different versions and configurations which can affect its UI. So CSI set out a three phase approach for the Development team to absorb the QA processes. (Watch how good we are at naming things in my team.)

Short-Term

The basic goal here was that the Developers would run through the same steps and processes that were already defined for our QA. This meant a lot of manual testing, manually configuring environments, etc. The biggest learning from our team during this phase was that there needs to be a Developer on an overview board whenever you have a QA department to ensure that test steps actually test the thing you want. We found many instances where an assumption in a test step was made that was incorrect or didn’t fully test something.

Medium-Term

The idea here was that once the Developers were familiar and comfortable running through the processes defined by the QA department, we would then take over ownership of the actual tests themselves and make edits. Often these edits resulted in the ability to test a piece of functionality with more accuracy or fewer steps. It also included the ability to stand up an environment that tests more permutations, etc. The ownership of the actual tests also meant that as we changed the steps, we needed to do it with automation in mind.

Long-Term

Automation. Whether it’s unit, integration, or UI tests, we need them automated. Let a computer run the same test over and over again, and let the Developers think about the ever-increasing complexity of what and how we test.

Our QA Philosophy

Because it would be impossible for us to test every permutation of potential clients’ environments before we release our software (or even an existing client’s environment), we approach our QA with the following philosophies:

Software Engineers are in the Best Position to Perform QA

This means that the people responsible for developing the feature or fixing the bug are the best people to write the test cases needed to validate the change, add those test cases to a release cycle, and even run the tests themselves. The why’s of this could be (and probably will be) a whole post. 🙂

Bugs Will Happen

We’re human. We’ll miss something we shouldn’t have. We won’t think of something we should have. On top of that, we’ll see something we didn’t even think was a possibility. So be kind and focus on the solution rather than the bad code commit.

More Confidence, Quicker

Our QA processes are here to give us more confidence in our software as quickly as possible, so we can release features or fixes to our clients. Whether we’re adding, editing, or removing a step in our QA process, we ask ourselves whether doing this will bring more confidence to our release cycle or speed it up. Sometimes we have to make trade-offs between the two.

Never Release the Same Bug Twice

Our QA process should be about preventing regressions on past issues just as much as it is about confirming functionality of new features. We want a robust enough process that when an issue is encountered and solved once, that same issue is never found again. At the least, this would mean we’d never have the same bug with the same root cause again. At most, it would mean that we never see the same type of bug again, as a root cause could be different even though the loss in functionality is the same. As an example of this last point, if our team works through an issue where a virus scanner is preventing us from saving an attachment to disk, we should have a robust enough test that will also detect this same loss in functionality (the inability to save an attachment to disk) for any cause (for example, a change to how Outlook allows access to the attachment, or another add-in stubbing the attachment to zero bytes for archiving purposes, etc.).
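One way to picture a test like that is to assert on the outcome (the full attachment is on disk) rather than on any particular cause. The Python sketch below is only an illustration under stated assumptions: `attachment_saved_correctly` and the three `*_save` functions are hypothetical stand-ins for the add-in and its environment, not our actual code (the real add-in runs inside Outlook).

```python
import tempfile
from pathlib import Path


def attachment_saved_correctly(save_fn, payload: bytes) -> bool:
    """Return True only if save_fn leaves the complete payload on disk.

    The check looks at the outcome alone, so it fails the same way
    whether the save was blocked, stubbed out, or truncated.
    """
    with tempfile.TemporaryDirectory() as tmp:
        target = Path(tmp) / "attachment.bin"
        try:
            save_fn(payload, target)
        except OSError:
            # Covers PermissionError and other I/O failures.
            return False
        return target.is_file() and target.read_bytes() == payload


# Hypothetical stand-ins for different environments.
def healthy_save(payload: bytes, target: Path) -> None:
    target.write_bytes(payload)


def blocked_save(payload: bytes, target: Path) -> None:
    # Simulates a virus scanner denying the write.
    raise PermissionError("write blocked")


def stubbed_save(payload: bytes, target: Path) -> None:
    # Simulates another add-in stubbing the attachment to zero bytes.
    target.write_bytes(b"")


payload = b"quarterly-report"
print(attachment_saved_correctly(healthy_save, payload))
print(attachment_saved_correctly(blocked_save, payload))
print(attachment_saved_correctly(stubbed_save, payload))
```

The point of the design is that the test never mentions a virus scanner or an archiving add-in. Only the healthy case passes, and any new root cause that produces the same loss in functionality trips the same check.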

How Did We Do?

We started this journey with a handful of unit tests that were all automated in our CI environment.

Short-Term Phase

During the Short-Term phase, there was an emphasis on ensuring that new commits had unit tests alongside them. Did we sometimes make a decision to release a feature with only manual tests because the code base didn’t lend itself to unit testability? YES! But we worked hard to always ensure we had a good reason for excluding unit tests instead of just assuming it couldn’t be done because it hadn’t been done before. Being flexible while keeping your long-term goal in mind is key and, at times, challenging.

Medium-Term

This phase wasn’t made up of nearly as much test re-writing as we had originally set out to do. We added a section to our pull requests to include links to any manual testing steps required to test the new code. This resulted in more new, manual tests being written by developers than edits to existing ones. We did notice that the quality of tests changed. It’s tempting to say “for the better” or “with better efficiency”, but I believe most of the change can be attributed to an understanding that the tests were now being written for a different audience, namely Developers. They became a bit more abstract and a bit more technical. Less intuitive. They also became a bit more verbose, as we get a bad taste in our mouth whenever we see a manual step that says something like “Trigger an EnforcerFilter” with no description of which one. One that displays something to the user, or just the admin? Etc.

This phase was also much shorter than we had originally thought it would be.

Long-Term

This was my favorite phase. I’m actually one of those software engineers who LOVE writing unit tests. I attribute this to the interface of JetBrains’ ReSharper (I could write about my love of ReSharper all day), which gives me oh-so-satisfying green checkmarks as my tests run… I love seeing more and more green checkmarks!

We had arranged a long-term OKR with Andy, which gave us three quarters in 2021 to implement automation of three of our major modules (Constructor, Enforcer, and Guardian), with a stretch goal of getting one test working for our fourth major module, Defender. We blew this out of the water and met them all (including a beta module, Architect) in one quarter. It was addicting writing UI tests and watching the keyboard and mouse move on their own.

Wrapping it All Up

Like many software product companies large and small, Tessian started out with a manual QA department composed of technologists but not Software Engineers. Along the way, we made the decision that Software Engineers need to own the QA of the software they work on. This led us on a journey, which included closer reviews of existing tests, writing new tests, and finally automating a majority of our tests. All of this combined allows us to release our software with more confidence, more quickly.

Stay tuned for articles where we go into details about the actual automation of UI tests and get our hands dirty with some fun code.